New priority-based round-robin scheduler

11 May 2026

Today I’d like to show you, how I’ve added priorities to MOP3’s round-robin scheduler!

Code to follow along:

Motivation

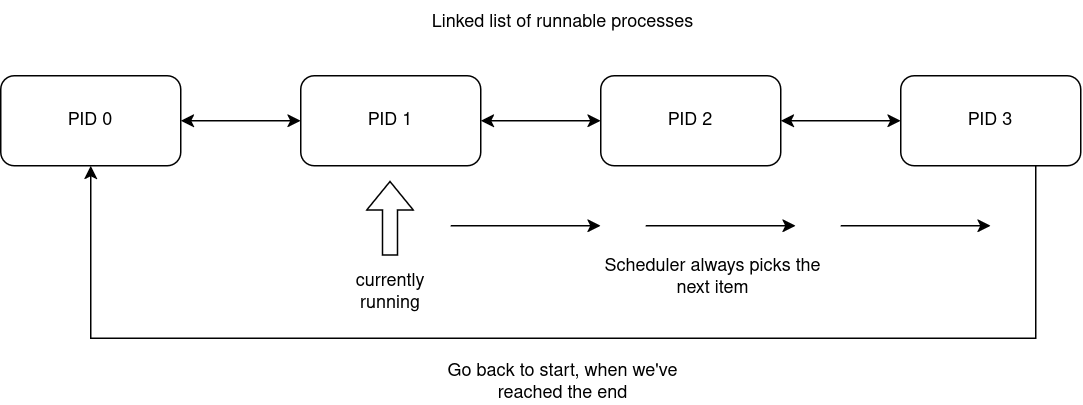

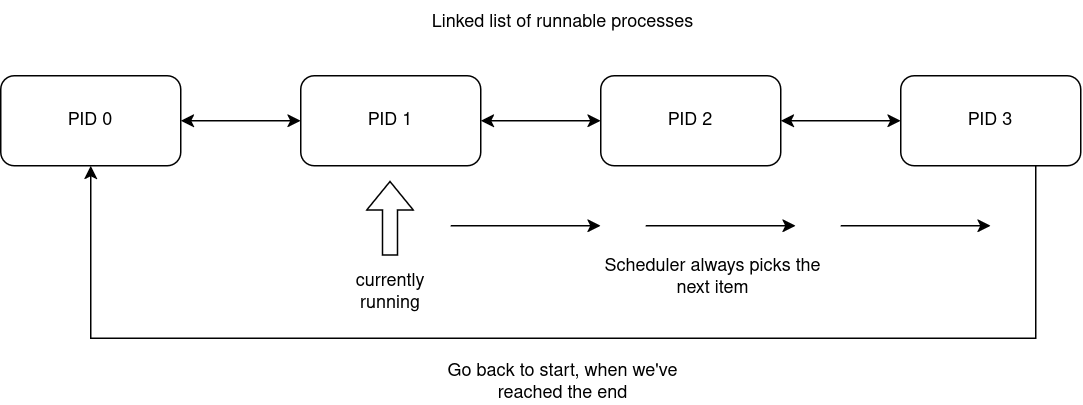

So far MOP3 was fine with a basic round-robin scheduler. If you don’t know, a round-robin scheduler is a scheduler that has a list of runnable processes and picks the next item from it to execute, but when the end is reached, the algorithm wraps around and starts from the first item.

Simple flow graph:

The issue

Round-robin is simple and easy to implement but quickly becomes insufficient as the operating system grows in features, subsystems and drivers, which increases the amount of concurrently running apps. By having no priorities, everything equates to the same priority. This means that your text editor, which you’re currently typing characters into is as important for the scheduler as a USB controller daemon/poller, which does actual work only for eg. when a new device is plugged in (which is very infrequent). By having priorities, we can tell the scheduler that this USB poller process can be run less frequently, than this other app and thus reallocate precious CPU time to more important tasks.

The catch

Priorities aren’t so obvious to implement into our scheduler. One major issue is that if there are some apps with higher priorities and an app with a lower priority, the lower one will be "starved" of CPU time - it won’t be scheduled, because there will always be a higher priority task to run.

How do we fix this?

-

Priority aging!

What is priority aging? It’s a technique, where a process has a certain priority, but it’s value "gets rewarded" over time. From the scheduler’s perspective, a process has been waiting for long enough that the scheduler "feels bad" and was to move a process up front.

Modifying struct proc

struct proc {

int pid;

int exec_pid;

struct rb_node_link proc_tree_link;

struct rb_node_link procgroup_memb_tree_link;

struct list_node_link cpu_run_q_link;

struct list_node_link* sq_entries;

struct procgroup* procgroup;

struct proc_platformdata pdata;

uint32_t flags;

struct cpu* cpu;

int state;

uintptr_t uvaddr_argument;

struct proc_suspension_q done_sq;

char name[PATH_MAX + VOLUME_MAX];

char cwv[VOLUME_MAX];

/* ------------------------------------------ */

int priority; // !! <-- base priority

int dynamic_priority; // !! <-- rewarding priority

};We’ve added these two new fields: the base priority number and the rewarding priority number. Our priority system will be reversed if you’re used to UNIX-style priorities, ie. our priorities can be taken at face value. UNIX priorities are: higher number = worse, lower number = better, which is quite counter-intuitive. We’ll be using priority numbers to denote actual priority in a logical sense (more = good, less = bad).

New scheduling algorithm

/* This is our function to figure out the next process to run on a given CPU */

static struct proc*

proc_find_sched(struct cpu* cpu) {

if (!cpu->proc_run_q)

return NULL;

/* first process from our run queue list */

struct list_node_link* current = cpu->proc_run_q;

struct proc* best_proc = NULL;

int highest_prio = -1; /* some very low priority value */

do {

struct proc* proc = list_entry(current, struct proc, cpu_run_q_link);

/* Is process ready? */

/* (We also don't want to run kernel processes, because those are stubs for other subsystems) */

if (proc->state == PROC_READY && !(proc->flags & PROC_KPROC)) {

/* Here we want to first pick the most suffering process */

if (proc->dynamic_priority > highest_prio) {

highest_prio = proc->dynamic_priority;

best_proc = proc;

}

if (proc->dynamic_priority < 100)

proc->dynamic_priority++;

}

/* pick next or wrap */

current = current->next ? current->next : cpu->proc_run_q;

} while (current != cpu->proc_run_q);

if (best_proc == NULL)

return NULL;

if (cpu->proc_current->cpu_run_q_link.next)

current = cpu->proc_current->cpu_run_q_link.next;

else

current = cpu->proc_run_q;

struct list_node_link* start = current;

/* Now we do normal round-robin among processes with highest priority */

do {

struct proc* proc = list_entry(current, struct proc, cpu_run_q_link);

if (proc->state == PROC_READY &&

!(proc->flags & PROC_KPROC) &&

proc->dynamic_priority == highest_prio) {

/* back to base priority */

proc->dynamic_priority = proc->priority;

return proc;

}

/* pick next or wrap */

current = current->next ? current->next : cpu->proc_run_q;

} while (current != start);

return NULL;

}This is it! We’ve just implemented priorities!

User customizability

Now to let the user apps customize their priorities, we add new syscalls: get_priority and set_priority.

/* int set_priority(int prio) */

DEFINE_SYSCALL(sys_set_priority) {

int prio = (int)a1;

if (prio > PRIORITY_MAX)

prio = PRIORITY_MAX;

proc->priority = prio;

return SYSRESULT(ST_OK);

}

/* int get_priority(void) */

DEFINE_SYSCALL(sys_get_priority) { return SYSRESULT(proc->priority); }static syscall_handler_func_t handler_table[] = {

/* ... */

[SYS_SET_PRIORITY] = &sys_set_priority,

[SYS_GET_PRIORITY] = &sys_get_priority,

};Example in the USB poller

In the story at the start of the article, I’ve talked about the USB poller problem. It’s actually the reason I’ve decided to implement priorities into the scheduler. Here’s how we can utilize the new syscalls:

#include <...>

static bool cmdline_poll = false;

static char cmdline_dev[CMDLINE_OPT_VALUE_MAX];

static struct cmdline_opt cmdline_opts[] = {

CMDLINE_OPT("p", "poll", CMDLINE_OPT_VALUE_BOOL, false, &cmdline_poll),

CMDLINE_OPT("d", "device", CMDLINE_OPT_VALUE_STRING, false,

(char*)cmdline_dev),

CMDLINE_END(),

};

static void

usb_poll(void) {

/* PRIORITY_LOW = 5, which is sufficient for system services and background tasks */

set_priority(PRIORITY_LOW);

for (;;) {

int r =

device_do(cmdline_dev, XUSBCTRL_POLL_DRIVER, NULL, NULL, NULL, NULL);

if (r == -ST_NOT_FOUND)

return;

}

}

void

app_main(void) {

if (cmdline_parse(get_cmdline(), cmdline_opts) < 0) {

mprintf("Failed to parse commandline arguments\n");

return;

}

if (cmdline_poll) {

usb_poll();

}

}Reversed priorities

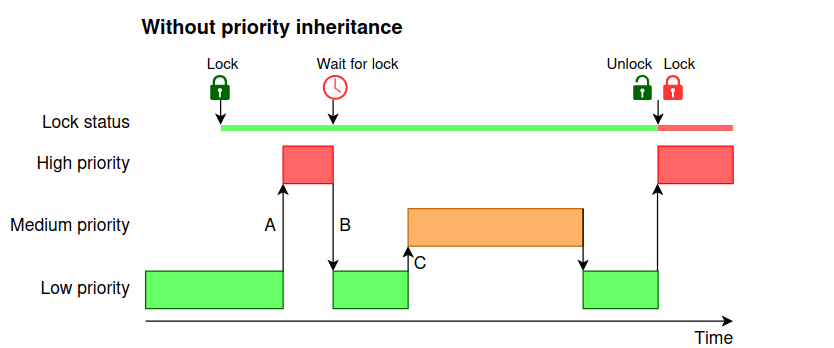

Now that we have priorities up and running, there’s a still small issue. MOP3 has a mutex API, allowing processes to synchronize and enter critical sections exclusively, which is essential to writing good multithreaded code.

When one process owns a mutex, other processes that try to hold it too, fail and have to be put to sleep mode until they can receive the mutex. This is so that a process doesn’t spin mindlessly in place (there’s place and time for spinlocks though, but not here and not today).

Mutexes don’t play nice with priorities, because they can actually reverse them!

The core issue is that a higher priority task is blocked by a lower priority task.

This is quite complicated to explain via text, so I’ll leave this diagram here:

Coding it up

Our mutex struct is quite simple:

struct proc_mutex {

struct proc_resource* resource;

bool locked;

struct proc_suspension_q suspension_q;

struct proc* owner;

};We don’t change anything here.

void

proc_mutex_lock(struct proc* proc, struct proc_mutex* mutex,

struct reschedule_ctx* rctx) {

if (!mutex->locked || mutex->owner == proc) {

mutex->locked = true;

mutex->owner = proc;

return;

}

/* If our priority is better, boost the owner. We need to reschedule, so that

* the owner runs with new priority.

*/

if (proc->dynamic_priority > mutex->owner->dynamic_priority) {

mutex->owner->dynamic_priority = proc->dynamic_priority;

rctx_insert_cpu(rctx, mutex->owner->cpu);

}

proc_sq_suspend(proc, &mutex->suspension_q, rctx, NULL, NULL);

}void

proc_mutex_unlock(struct proc* proc, struct proc_mutex* mutex,

struct reschedule_ctx* rctx) {

if (mutex->owner != proc) {

return;

}

/* If we aged, while holding the mutex, bring the priority back down */

if (proc->dynamic_priority > proc->priority) {

proc->dynamic_priority = proc->priority;

rctx_insert_cpu(rctx, proc->cpu);

}

struct list_node_link* node = mutex->suspension_q.proc_list;

if (node) {

struct proc_sq_entry* sq_entry =

list_entry(node, struct proc_sq_entry, sq_link);

struct proc* resumed_proc = sq_entry->proc;

mutex->owner = resumed_proc;

mutex->locked = true;

proc_sq_resume(resumed_proc, sq_entry, rctx, 0);

return;

}

mutex->locked = false;

mutex->owner = NULL;

}void

proc_cleanup_resource_mutex(struct proc_resource* resource,

struct reschedule_ctx* rctx) {

struct proc_mutex* mutex = &resource->u.mutex;

/* if the owner has aged, bring back it's priority */

if (mutex->owner != NULL && mutex->locked) {

if (mutex->owner->dynamic_priority > mutex->owner->priority) {

mutex->owner->dynamic_priority = mutex->owner->priority;

rctx_insert_cpu(rctx, mutex->owner->cpu);

}

}

while (mutex->suspension_q.proc_list != NULL) {

struct list_node_link* node = mutex->suspension_q.proc_list;

struct proc_sq_entry* sq_entry =

list_entry(node, struct proc_sq_entry, sq_link);

struct proc* suspended_proc = sq_entry->proc;

proc_sq_resume(suspended_proc, sq_entry, rctx, 0);

}

mutex->locked = false;

mutex->owner = NULL;

}